Open Source Data Observability and DataOps Software

Stop Embarrassing Data Quality Problems.

Find Errors In Your Entire Data Journey.

Data Observability Software: When ‘Failure Is Not An Option.’

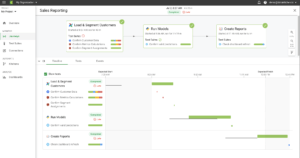

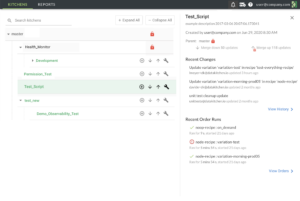

DataKitchen provides software to observe and validate every data journey in an organization, from source to customer value, in development and production, so that data teams can deliver insight to their customers with virtually no errors and a rapid rate of new insight creation.

Our DataOps software allows data and analytic teams to observe complex end-to-end processes, generate and execute tests, and validate the data, tools, processes, and environments across their entire data analytics organization. This improves quality, shortens cycle time, and raises team productivity.

Data Journey Reliability. Delivered.

Data breaks. Servers break. Your toolchain breaks. We ensure your team is the first to know and the first to solve the problem, with visibility across and down your Data Journey.

Reduce Errors to ZERO

Win the trust and confidence of your business customers by eliminating errors in your analytics. What’s more, less time spent on unplanned work means more time spent on innovation.

Find Problems Before Your Customers

Stop the embarrassment of incorrect data, dashboards, models, or even just being late. Stop wasting time on data fire drills. Protect yourself from data provider ineptitude.

Prevent Problems From Happening Again

Observing the entire data journey lets you permanently prevent problems from recurring, so you spend less time worrying about what may go wrong and more time creating.

Open Source Data Observability

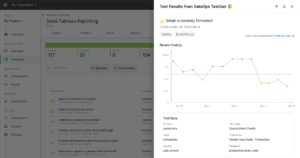

Two full-featured Apache 2.0 licensed Data Observability products that examine new data for quality issues, continuously find data anomalies, monitor product tools and pipelines, and enable development testing across your entire data architecture.

Start Improving Your Data Quality Validation and DataOps Today!

DataOps Consulting Services

Not sure how to get started? Would you like regular conversations with a DataOps coach?

Could your team benefit from DataOps training?

Everybody wants the benefits of DataOps – delivering faster and with fewer errors.

But getting started can be challenging, and sometimes the impediments are cultural or organizational.

DataKitchen’s DataOps Consulting Services provide concrete, actionable steps for success.

Choose from different service offerings or customize a program to meet your organization’s needs and enjoy the benefits of DataOps.

Why Use DataKitchen’s Software?

“We realized dramatic cost savings and also capitalized on opportunities better because we were more efficient and could do things more quickly.”

What’s Cooking at DataKitchen

Why We Open-Sourced Our Data Observability Products

Why open source DataOps Observability and DataOps TestGen? Our decision to share full-featured versions of these products stems from DataKitchen’s long-standing commitment to enhancing productivity for data teams and promoting the use of automated, observed, and trusted tools. It aligns with our company’s philosophy of sharing knowledge, and now software, to inspire teams to implement DataOps effectively.